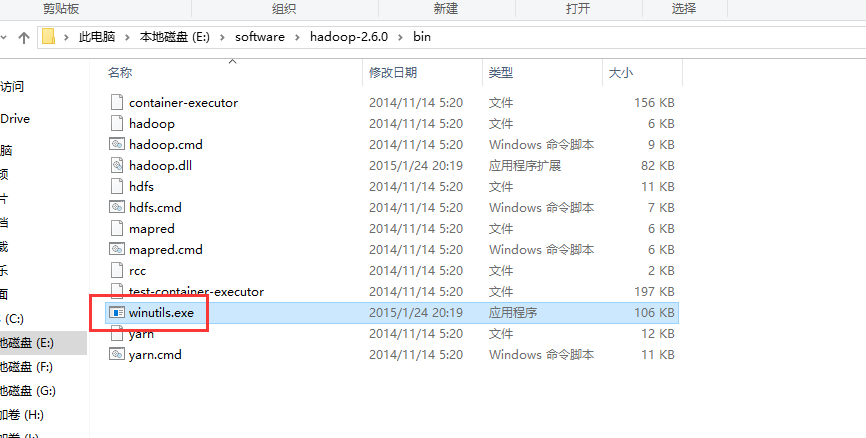

For testing if Spark is working or not, you can run the example from the bin folder of Spark Sets Authentication Azure CLI Build Azure VM Azure Data Lake Store. All processes still must have access to the native components: hadoop.dll and winutils.exe. Use the below command to grant the privileges.ĭ:\spark>D:\winutils\winutils.exe chmod 777 D:\tmp\hiveġ0. We have reinstated support for launching Hadoop processes on Windows by using Cygwin to run the shell scripts. place the elusive winutils.exe under the plugin folder and point HADOOPHOME. \tmp\hive directory on HDFS should be writable. The HDFS snapshot/restore plugin is built against the latest Apache Hadoop. Grant permissions to the folder C:\tmp\hive if you get any permissions error. Set PATH environment variable to include %HADOOP_HOME%\bin as followsĩ.

WINUTILS EXE HADOOP S INSTALL

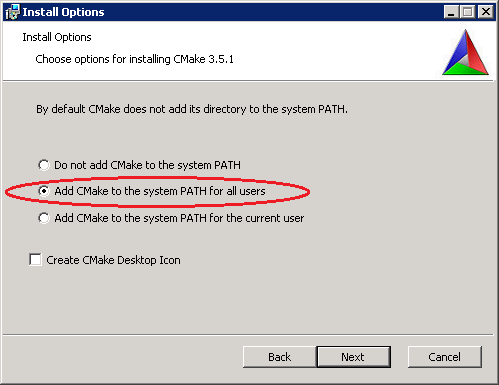

For example, if you install winutils.exe in D:\winutils\bin\winutils.exe directory then set the path to Set HADOOP_HOME to the path of winutils.exe. So to overcome this error, download winutils.exe and place it in any location.(for example,)Ĩ. Though we aren’t using Hadoop with Spark, but somewhere it checks for HADOOP_HOME variable in configuration. For example, if you have downloaded Spark to C:\Spark\spark-1.6.1-bin-hadoop2.6 directory then set Set SPARK_HOME and PATH environment variable to the downloaded spark folder. Lets choose a Spark prebuilt package for Hadoop from here.

WINUTILS EXE HADOOP S CODE

Scala code runner version 2.11.8 - Copyright 2002-2016, LAMP/EPFL Picked up _JAVA_OPTIONS: -Xmx512M -Xms512M To check if Scala is working or not, run following command. HADOOP-11003 .Shell should not take a dependency on binaries being deployed when used as a library Resolved HADOOP-10775 Shell operations to fail with meaningful errors on windows if winutils. For example, if you have downloaded Scala in C:\scala directory then setĪlso, you can set _JAVA_OPTIONS environment variable to the value mentioned below to avoid any Java Heap Memory problems you encounter, if any.ĥ. Set the environment variables, SCALA_HOME to reflect the bin folder of downloaded scala directory.

Choose the first option of "Download Scala x.y.z. Set JAVA_HOME and PATH variables as environment variables.ģ. If not present, download Java from here.Ģ. Java is a prerequisite for running Apache Spark. Here are the steps to install and run Apache Spark on Windows in standalone mode.ġ. If you do not want to run Apache Spark on Hadoop, then standalone mode is what you are looking for.
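The environment-variable steps above can be sketched in Python. This is a minimal, hypothetical helper (the names `spark_env_values` and `batch_lines` and the JDK path are illustrative assumptions, not part of any tool). It derives the values the steps describe, including HADOOP_HOME as the parent of the bin folder that holds winutils.exe, and renders them as cmd.exe `set` lines. It uses Python's `ntpath` module so Windows path rules apply even when the script runs elsewhere; Python 3.9+ is assumed.

```python
import ntpath


def spark_env_values(java_home: str, scala_dir: str, spark_dir: str,
                     winutils_exe: str) -> dict[str, str]:
    """Derive the environment variables described in the steps.

    Hypothetical helper: all paths are Windows-style examples.
    """
    bin_dir = ntpath.dirname(winutils_exe)
    # Hadoop looks for %HADOOP_HOME%\bin\winutils.exe, so winutils.exe
    # must sit in a folder named "bin".
    if ntpath.basename(bin_dir).lower() != "bin":
        raise ValueError("winutils.exe must be placed in a folder named bin")
    return {
        "JAVA_HOME": java_home,
        # The steps point SCALA_HOME at Scala's bin folder.
        "SCALA_HOME": ntpath.join(scala_dir, "bin"),
        "SPARK_HOME": spark_dir,
        # HADOOP_HOME is the parent of bin, e.g. D:\winutils.
        "HADOOP_HOME": ntpath.dirname(bin_dir),
        # Optional heap settings against Java heap memory problems.
        "_JAVA_OPTIONS": "-Xmx512M -Xms512M",
    }


def batch_lines(values: dict[str, str]) -> list[str]:
    """Render cmd.exe 'set' lines; they only affect the current console session."""
    lines = [f"set {name}={value}" for name, value in values.items()]
    path_additions = [
        ntpath.join(values["JAVA_HOME"], "bin"),
        values["SCALA_HOME"],  # already a bin folder
        ntpath.join(values["SPARK_HOME"], "bin"),
        ntpath.join(values["HADOOP_HOME"], "bin"),
    ]
    lines.append("set PATH=%PATH%;" + ";".join(path_additions))
    return lines


if __name__ == "__main__":
    example = spark_env_values(
        java_home=r"C:\Java\jdk1.8.0",  # hypothetical JDK location
        scala_dir=r"C:\scala",
        spark_dir=r"C:\Spark\spark-1.6.1-bin-hadoop2.6",
        winutils_exe=r"D:\winutils\bin\winutils.exe",
    )
    print("\n".join(batch_lines(example)))
```

Lines produced this way only affect the console session that runs them (for example, if saved into a .cmd file and run before starting Spark); to make the variables permanent, set them in the Windows environment-variables dialog instead.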
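To double-check the JAVA_HOME, SPARK_HOME, and HADOOP_HOME settings before starting Spark, a small Python check can catch the most common winutils mistake: pointing HADOOP_HOME at winutils.exe itself instead of at its parent folder. This is a sketch under assumptions: `check_spark_env` and `winutils_location` are hypothetical names, and the environment mapping and file-existence test are parameters so the logic can be tried with a plain dictionary.

```python
import ntpath
import os
from typing import Callable, Mapping


def winutils_location(hadoop_home: str) -> str:
    """Where Hadoop expects winutils.exe: %HADOOP_HOME%\\bin\\winutils.exe."""
    return ntpath.join(hadoop_home, "bin", "winutils.exe")


def check_spark_env(env: Mapping[str, str] = os.environ,
                    exists: Callable[[str], bool] = os.path.isfile) -> list[str]:
    """Return a list of problems found; an empty list means every check passed."""
    problems = []
    for name in ("JAVA_HOME", "SPARK_HOME", "HADOOP_HOME"):
        if not env.get(name):
            problems.append(f"{name} is not set")

    hadoop_home = env.get("HADOOP_HOME")
    if hadoop_home:
        exe = winutils_location(hadoop_home)
        if not exists(exe):
            problems.append(f"winutils.exe not found at {exe}")
        # Compare PATH entries case-insensitively, ignoring trailing backslashes.
        wanted = ntpath.normcase(ntpath.join(hadoop_home, "bin")).rstrip("\\")
        entries = {ntpath.normcase(p).rstrip("\\")
                   for p in env.get("PATH", "").split(";") if p}
        if wanted not in entries:
            problems.append(f"PATH does not include {ntpath.join(hadoop_home, 'bin')}")
    return problems


if __name__ == "__main__":
    for problem in check_spark_env():
        print("Problem:", problem)
```

Run on the Windows machine itself, an empty result means the variables and the winutils.exe location line up with the steps; with HADOOP_HOME set to D:\winutils\bin\winutils.exe, the check reports that winutils.exe cannot be found.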
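The permissions step can also be scripted. The sketch below is again hypothetical (`hive_chmod_command` and `grant_hive_permissions` are illustrative names): it builds the same `winutils.exe chmod 777` call used for the \tmp\hive folder from HADOOP_HOME and runs it with `subprocess.run`. Creating the folder first with `os.makedirs` is an added assumption, not part of the original steps.

```python
import ntpath
import os
import subprocess


def hive_chmod_command(hadoop_home: str, hive_dir: str = r"C:\tmp\hive") -> list[str]:
    """Build the winutils.exe call that opens up the Hive scratch folder."""
    winutils = ntpath.join(hadoop_home, "bin", "winutils.exe")
    return [winutils, "chmod", "777", hive_dir]


def grant_hive_permissions(hadoop_home: str, hive_dir: str = r"C:\tmp\hive") -> None:
    """Run the command on Windows; raises CalledProcessError if winutils.exe fails."""
    # Assumption: create the folder first so chmod has something to act on.
    os.makedirs(hive_dir, exist_ok=True)
    subprocess.run(hive_chmod_command(hadoop_home, hive_dir), check=True)
```

For example, `grant_hive_permissions(r"D:\winutils", r"D:\tmp\hive")` runs winutils.exe chmod 777 on D:\tmp\hive; it only makes sense on Windows, where winutils.exe is actually present.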